One-sample gaussianity test in admixture models using Bordes and Vandekerkhove estimation method

Source:R/gaussianity_test.R

gaussianity_test.RdPerform the hypothesis test to know whether the unknown mixture component is gaussian or not, knowing that the known one has support on the real line (R). The case of non-gaussian known component can be overcome thanks to the basic transformation by cdf. Recall that an admixture model has probability density function (pdf) l = p*f + (1-p)*g, where g is the known pdf and l is observed (others are unknown). Requires optimization (to estimate the unknown parameters) as defined by Bordes & Vandekerkhove (2010), which means that the unknown mixture component must have a symmetric density.

Usage

gaussianity_test(

sample1,

comp.dist,

comp.param,

K = 3,

lambda = 0.2,

conf.level = 0.95,

support = c("Real", "Integer", "Positive", "Bounded.continuous")

)Arguments

- sample1

Observed sample with mixture distribution given by l = p*f + (1-p)*g, where f and p are unknown and g is known.

- comp.dist

List with two elements corresponding to the component distributions involved in the admixture model. Unknown elements must be specified as 'NULL' objects. For instance if 'f' is unknown: list(f = NULL, g = 'norm').

- comp.param

List with two elements corresponding to the parameters of the component distributions, each element being a list itself. The names used in this list must correspond to the native R names for distributions. Unknown elements must be specified as 'NULL' objects (e.g. if 'f' is unknown: list(f=NULL, g=list(mean=0,sd=1)).

- K

Number of coefficients considered for the polynomial basis expansion.

- lambda

Rate at which the normalization factor is set in the penalization rule for model selection (in ]0,1/2[). See 'Details' below.

- conf.level

The confidence level, default to 95 percent. Equals 1-alpha, where alpha is the level of the test (type-I error).

- support

Support of the densities under consideration, useful to choose the polynomial orthonormal basis. One of 'Real', 'Integer', 'Positive', or 'Bounded.continuous'.

Value

A list of 6 elements, containing: 1) the rejection decision; 2) the p-value of the test; 3) the test statistic; 4) the variance-covariance matrix of the test statistic; 5) the selected rank for testing; and 6) a list of the estimates (unknown component weight 'p', shift location parameter 'mu' and standard deviation 's' of the symmetric unknown distribution).

Details

See the paper 'False Discovery Rate model Gaussianity test' (Pommeret & Vanderkerkhove, 2017).

Author

Xavier Milhaud xavier.milhaud.research@gmail.com

Examples

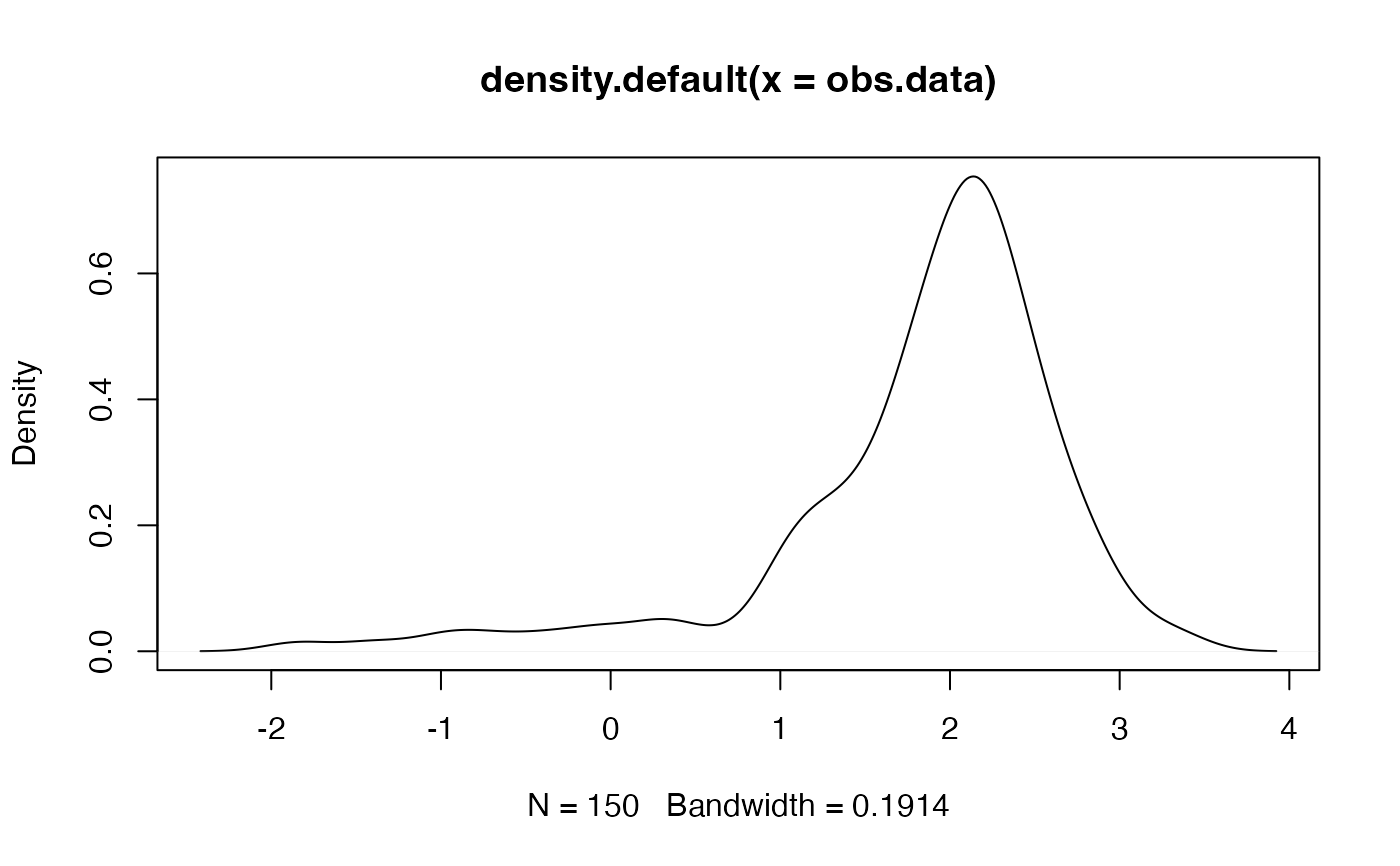

####### Under the null hypothesis H0.

## Parameters of the gaussian distribution to be tested:

list.comp <- list(f = "norm", g = "norm")

list.param <- list(f = c(mean = 2, sd = 0.5),

g = c(mean = 0, sd = 1))

## Simulate and plot the data at hand:

obs.data <- rsimmix(n = 150, unknownComp_weight = 0.9, comp.dist = list.comp,

comp.param = list.param)[['mixt.data']]

plot(density(obs.data))

## Performs the test:

list.comp <- list(f = NULL, g = "norm")

list.param <- list(f = NULL, g = c(mean = 0, sd = 1))

gaussianity_test(sample1 = obs.data, comp.dist = list.comp, comp.param = list.param,

K = 3, lambda = 0.1, conf.level = 0.95, support = 'Real')

#> Warning: Still needs to be implemented for cases where the unknown density mean is lower then the known density one!

#> Warning: Still needs to be implemented for cases where the unknown density mean is lower then the known density one!

#> $confidence_level

#> [1] 0.95

#>

#> $rejection_rule

#> [1] FALSE

#>

#> $p_value

#> [1] 0.5347312

#>

#> $test.stat

#> [1] 0.3853927

#>

#> $var.stat

#> [,1] [,2] [,3]

#> [1,] 0.2199311 NA NA

#> [2,] NA 1.089654 NA

#> [3,] NA NA 1.087517

#>

#> $rank

#> [1] 1

#>

#> $estimates

#> $estimates$p

#> [1] 0.8513844

#>

#> $estimates$mu

#> [1] 1.994872

#>

#> $estimates$s

#> [1] 0.5373499

#>

#>

## Performs the test:

list.comp <- list(f = NULL, g = "norm")

list.param <- list(f = NULL, g = c(mean = 0, sd = 1))

gaussianity_test(sample1 = obs.data, comp.dist = list.comp, comp.param = list.param,

K = 3, lambda = 0.1, conf.level = 0.95, support = 'Real')

#> Warning: Still needs to be implemented for cases where the unknown density mean is lower then the known density one!

#> Warning: Still needs to be implemented for cases where the unknown density mean is lower then the known density one!

#> $confidence_level

#> [1] 0.95

#>

#> $rejection_rule

#> [1] FALSE

#>

#> $p_value

#> [1] 0.5347312

#>

#> $test.stat

#> [1] 0.3853927

#>

#> $var.stat

#> [,1] [,2] [,3]

#> [1,] 0.2199311 NA NA

#> [2,] NA 1.089654 NA

#> [3,] NA NA 1.087517

#>

#> $rank

#> [1] 1

#>

#> $estimates

#> $estimates$p

#> [1] 0.8513844

#>

#> $estimates$mu

#> [1] 1.994872

#>

#> $estimates$s

#> [1] 0.5373499

#>

#>